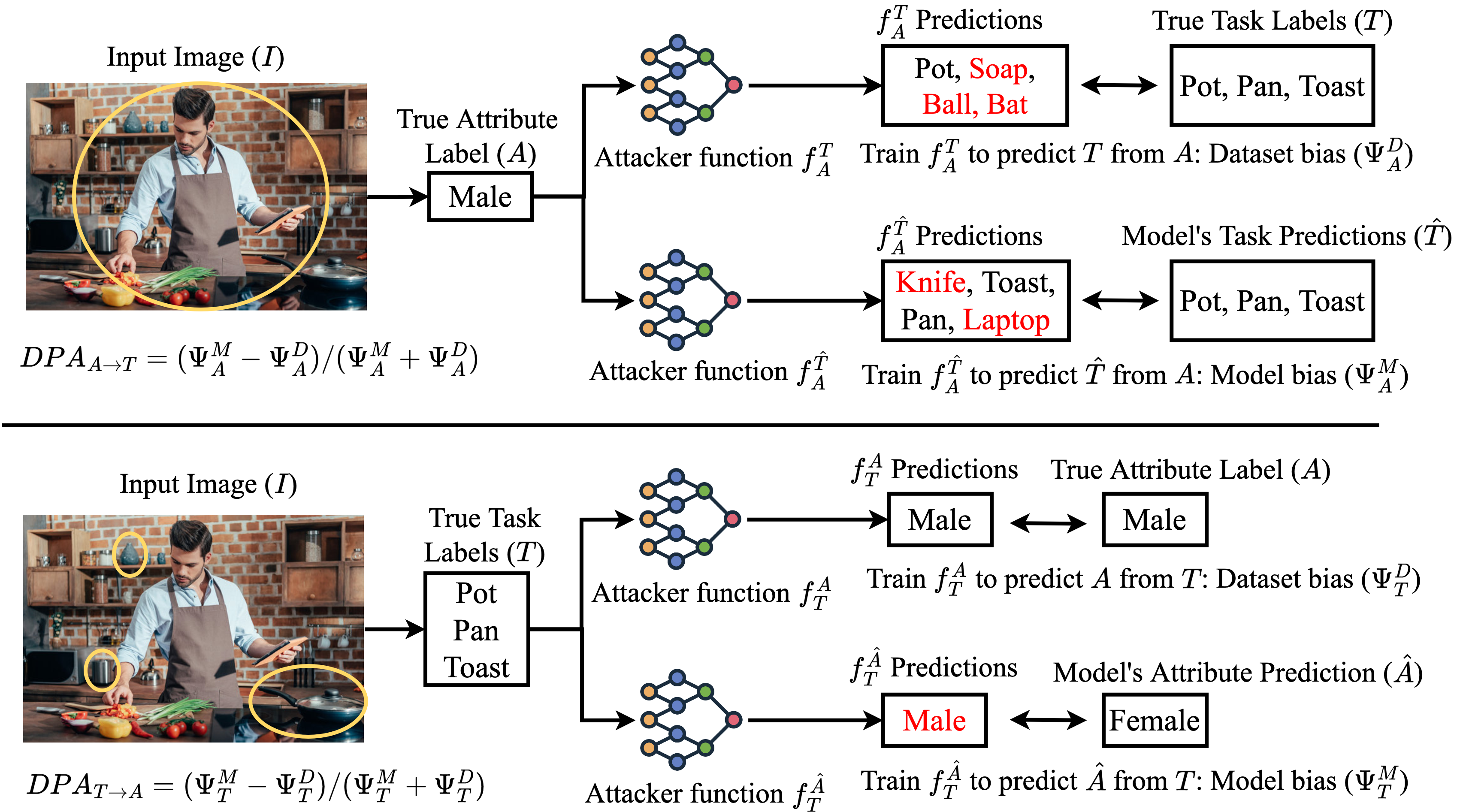

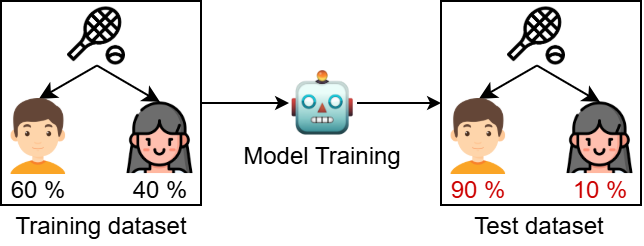

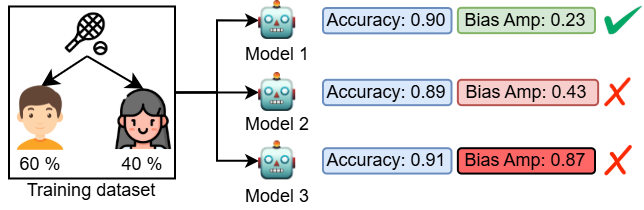

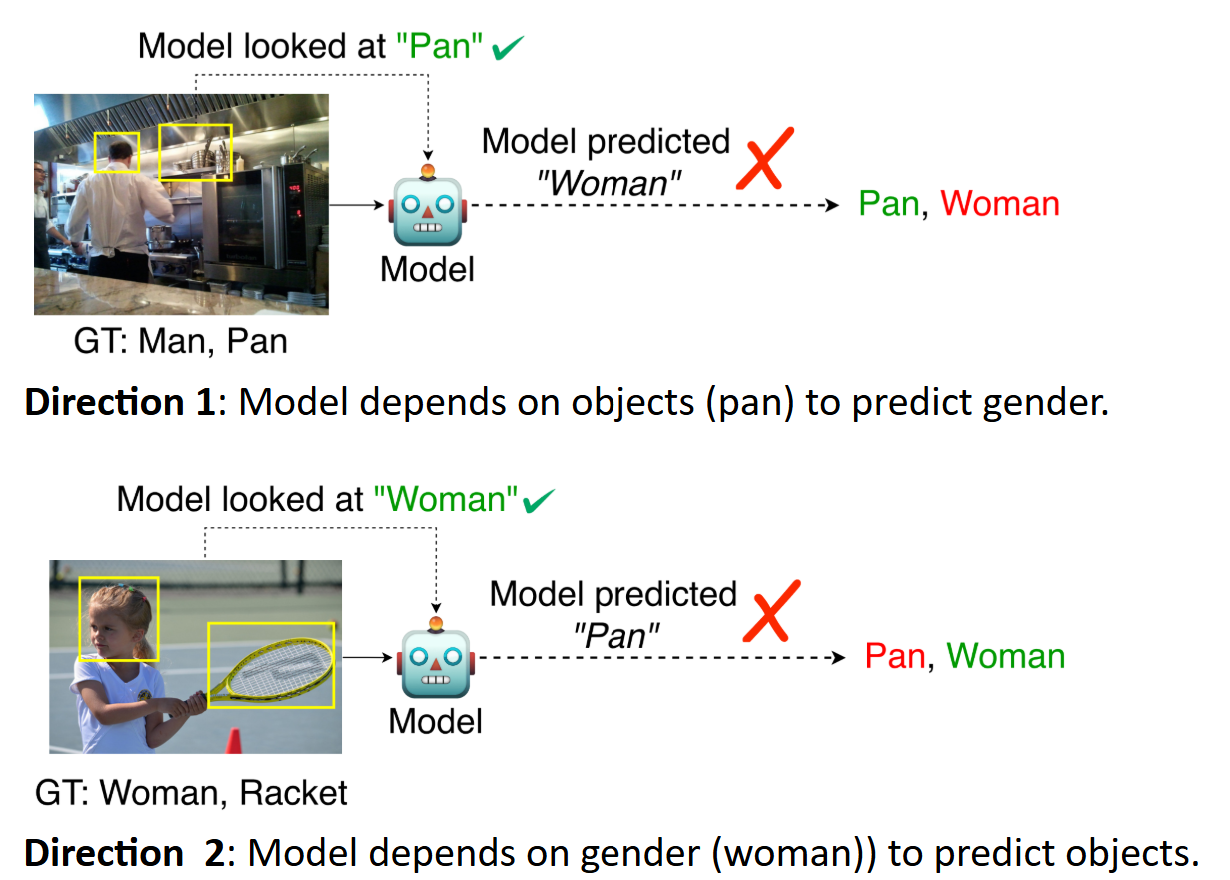

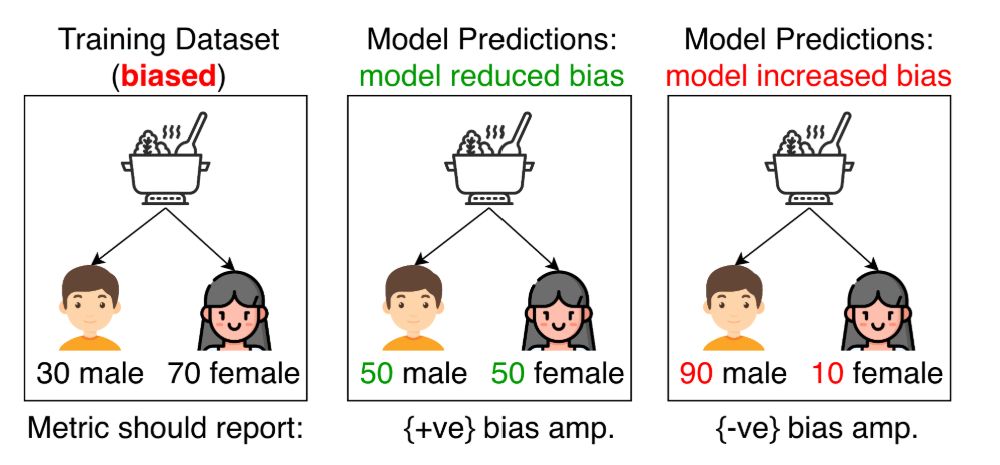

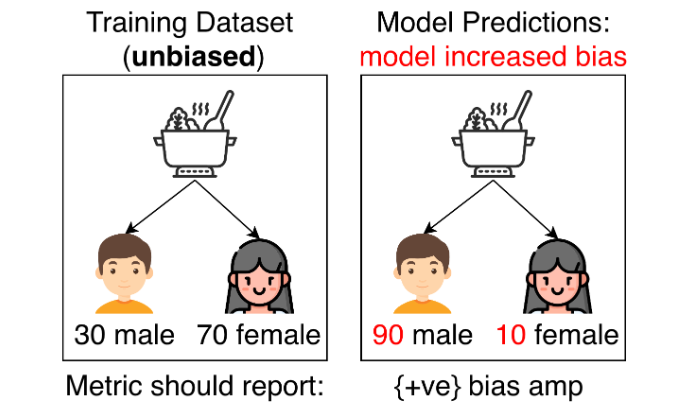

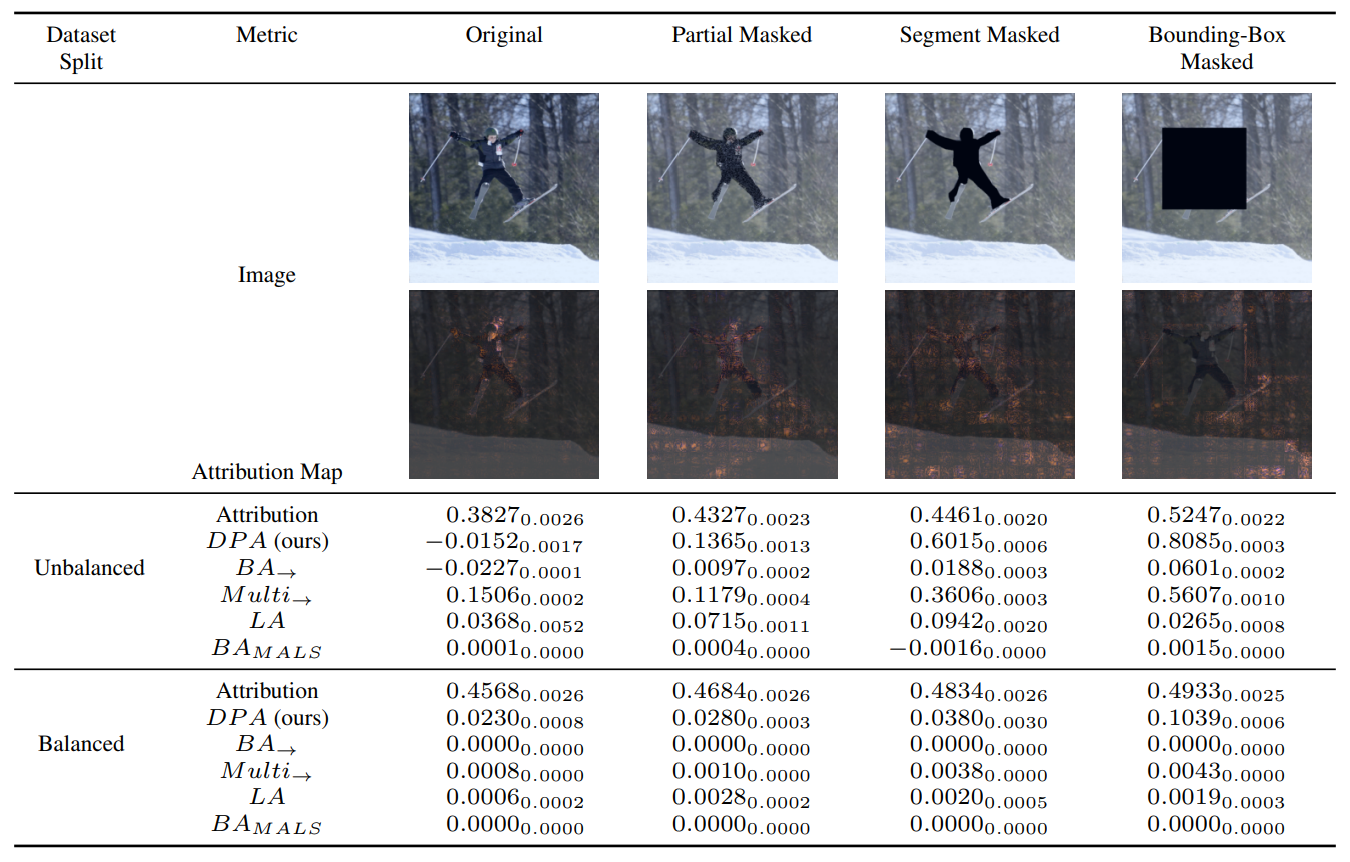

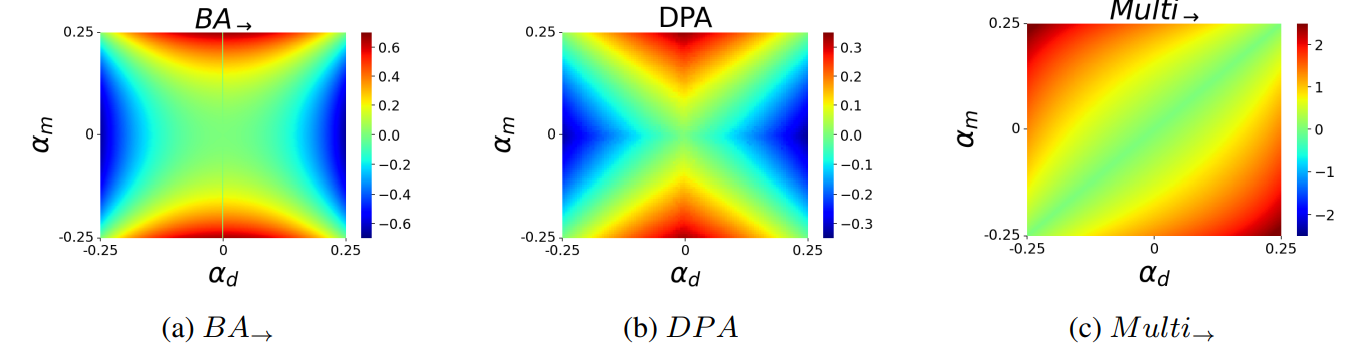

Most ML datasets today contain biases. Models trained on these datasets often not only learn the biases but can worsen them, a phenomenon known as bias amplification. Several co-occurrence-based metrics have been proposed to measure bias amplification in classification datasets. They measure bias amplification between a protected attribute (e.g., gender) and a task (e.g., cooking), and they support fine-grained bias analysis by identifying the direction in which a model amplifies biases. However, co-occurrence-based metrics have limitations: some fail to measure bias amplification in balanced datasets, while others fail to measure negative bias amplification. To address these issues, recent work proposed a predictability-based metric called leakage amplification (LA). However, LA cannot identify the direction in which a model amplifies biases. We propose Directional Predictability Amplification (DPA), a predictability-based metric that (1) is directional, (2) works with both balanced and unbalanced datasets, and (3) correctly identifies positive and negative bias amplification. DPA eliminates the need to evaluate models on multiple metrics to verify these three properties. DPA also improves on prior predictability-based metrics such as LA: it is less sensitive to the choice of attacker function (a hyperparameter in predictability-based metrics), reports scores within a bounded range, and accounts for dataset bias by measuring relative changes in predictability. Our experiments on well-known datasets, namely COMPAS (a tabular dataset) and COCO and ImSitu (image datasets), show that DPA is the most reliable metric for measuring bias amplification in classification problems.
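To make the idea of a co-occurrence-based measurement concrete, the following is a minimal sketch under simplifying assumptions. It is not DPA, LA, or any specific published metric: it only compares how often a protected attribute co-occurs with a task in the training labels versus in a model's predictions. The function names and the toy data are hypothetical and chosen purely for illustration.

```python
def cooccurrence_bias(pairs, task, attribute):
    """Fraction of examples labeled `task` whose protected attribute is `attribute`.

    `pairs` is a list of (attribute, task) tuples (a hypothetical data layout).
    """
    with_task = [a for a, t in pairs if t == task]
    if not with_task:
        raise ValueError(f"no examples with task {task!r}")
    return sum(1 for a in with_task if a == attribute) / len(with_task)


def cooccurrence_amplification(train_pairs, predicted_pairs, task, attribute):
    """Change in co-occurrence bias from training labels to model predictions.

    Positive values mean the predictions associate `attribute` with `task`
    more strongly than the training data does; negative values mean less.
    """
    return (cooccurrence_bias(predicted_pairs, task, attribute)
            - cooccurrence_bias(train_pairs, task, attribute))


# Toy data: 3 of 4 "cooking" training examples are labeled "female" ...
train_pairs = [("female", "cooking")] * 3 + [("male", "cooking")] * 1
# ... while 7 of 8 "cooking" predictions are labeled "female".
predicted_pairs = [("female", "cooking")] * 7 + [("male", "cooking")] * 1

amplification = cooccurrence_amplification(
    train_pairs, predicted_pairs, task="cooking", attribute="female"
)
print(amplification)  # 0.875 - 0.75 = 0.125
```

In this toy case the predictions strengthen the association already present in the training labels, so the difference is positive. The sketch looks at a single attribute-task pair and says nothing about direction (attribute to task versus task to attribute), which is part of what the directional metrics discussed above are designed to capture.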

TBA